Showcase of the 2015 participation of 2 teams and some of the strategies used for fire fighting contests in Portugal.

Things used in this project

Hardware components

Hand tools and fabrication machines

utility knife

Pliers

3D Printer (generic)

Story

Luis Electronic Projects

Disclaimer: This is more of a showcase, and its purpose is to guide people who want to participate in this type of contest. It is not a step-by-step guide on how to make a robot (maybe in the future I will make one).

Introducing the Teams

So first of all, if you were expecting a humanoid, RoboCop-like robot going around putting out fires, then I can tell you right now you will be disappointed.

This was for a small contest here in Portugal based on the Trinity College contest. The robotics club of the University of Coimbra participated with 2 teams.

The first was made up of 3 members that I was teaching to use the TM4C LaunchPad. The second was made up of members of the board, to fill the spot of a team that had to drop out due to exams, and we decided to build the robot in a couple of days (literally). This second one used an STM32.

I chose an STM32 instead of another Tiva because I had already helped the EquipaT with the software, including some libraries that I made for them to use in the contest. I was afraid that the judges, or other contestants, would call that a copy, which is not allowed, and... well... why not try out a new thing that I was gonna start working on anyway?

Short Summary of the Objective

The contest was pretty simple. There's a simulation of a single-floor house. There are 4 possible arena layouts (chosen at random), and the robot has to navigate its way through the arena, find a candle and blow it out somehow. The doorways of the rooms are marked with white lines over a usually black floor, but some colorful carpets force you to use color sensors (instead of binary white/black ones). There are also some other obstacles and some bonus "challenges".

So first, the "EquipaT"

The robot's MCU board was a TM4C123 LaunchPad with an ARM Cortex-M4F running at 80 MHz. The team used the TivaWare libraries and CCS to program the board.

For navigation

We chose 2 HC-SR04.

For Sensing the White Line

We used a TCS3200/TCS230.

For the Flame Sensor

We got our hands on a UVTron, but unfortunately it arrived not working, so we used a board with a near-IR phototransistor from DFRobot.

The Chassis

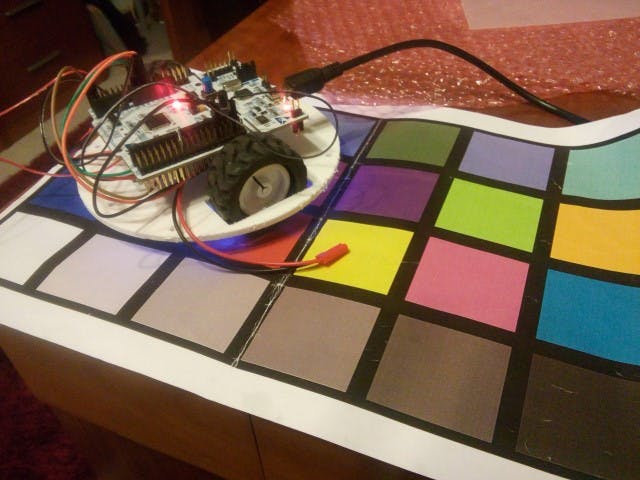

Based on a circular Pololu chassis of about 12 cm. Unfortunately I found it a bit fragile with so many holes, and it didn't have any good way to mount the TCS3200, so we made not only the 2 upper "floors" in PVC but also the bottom one.

The Motors:

Following the Pololu chassis kit, we used Pololu's 100:1 micro motors (the high-power ones) and a DRV8833 motor driver (real handy with the reverse protection and all). The fan was improvised out of some eBay parts, and we printed a support for it. The fan turned out to be really powerful for its size! I believe it was meant for those really small quadcopters (the ones that fit in a hand).

I was particularly happy with the TCS3200. It was actually the first sensor with which I managed to reliably tell white apart from all the other colors (I didn't try much to identify the rest, since it wasn't needed). I used an algorithm that takes RGB coordinates (0-255) and checks whether the color is neutral, and it proved to be really reliable (and portable between RGB sensors)!
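As a rough illustration of the neutral-color idea, here is a minimal C sketch. It is not the code used on the robot: the function names and both thresholds are assumptions, and it presumes the sensor readings were already scaled to 0-255 per channel. A color counts as neutral when its three channels are close to each other, and as white when it is also bright.

```c
#include <stdbool.h>
#include <stdint.h>

/* Assumed tuning values for illustration, not taken from the project. */
#define MAX_SPREAD      40u   /* largest allowed gap between channels */
#define MIN_BRIGHTNESS 180u   /* weakest channel must reach this for white */

static uint8_t max3(uint8_t a, uint8_t b, uint8_t c)
{
    uint8_t m = (a > b) ? a : b;
    return (m > c) ? m : c;
}

static uint8_t min3(uint8_t a, uint8_t b, uint8_t c)
{
    uint8_t m = (a < b) ? a : b;
    return (m < c) ? m : c;
}

/* Neutral (grey, black or white): no channel dominates the others. */
bool is_neutral(uint8_t r, uint8_t g, uint8_t b)
{
    return (uint8_t)(max3(r, g, b) - min3(r, g, b)) <= MAX_SPREAD;
}

/* White: neutral and bright on every channel. */
bool is_white(uint8_t r, uint8_t g, uint8_t b)
{
    return is_neutral(r, g, b) && min3(r, g, b) >= MIN_BRIGHTNESS;
}
```

Because the check only compares channels against each other, it does not care which RGB sensor produced the values, as long as they are normalized the same way.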

I also tested a TCS34725 with Energia and liked it, although the TCS3200 seems to be able to sit further from the ground. I also tried it on Arduino, but it was a pain to get the color identification algorithm working there (in the end I did it, check it out). I'm not particularly fond of I2C (maybe just not yet), so I didn't use it since the TCS3200 worked so well.

I wanted to improve the phototransistor circuit. I didn't like that the normal resistor + phototransistor configuration requires calibrating the resistor. So instead I tried using a 555 timer to get a positive pulse that depends on the current.

The navigation could be better. Both the team and I lacked the control and navigation classes that would have helped a lot, but simple "if"s and PWM control without feedback were enough. They worked hard to get the bot going despite all the exams they had.

"Cybertronics Team", the board team

Besides me, no one on the team had ever been to this contest, and only 1 other member had been to any contest, so although it's the "board team" it's not particularly experienced in these contests. Nonetheless, we took on the challenge. Since I really like getting the lower-level programming working, I decided that we would use an STM32F0. We had an STM32F072RB Nucleo board, so we used that. I had also made some APIs for the Tiva team, and I had tried to split them into an abstract side and a "driver" side, so porting the APIs was pretty simple! The sensors and actuators required only timers and GPIO, so even simpler! I got it working in no time. It was my first project with the STM32 (besides simple examples), and I really enjoyed using the Standard Peripheral Library.

We used pretty much the same parts as the Tiva team; basically only the MCU changed.

Doing some early tests with the TCS3200

Well, it was fun, and considering I didn't expect to participate in this contest it went well: we got 5th place. The only thing that failed was the flame sensor. It isn't reliable, so it needed more time, which I didn't have (2 days building + programming and 1 day making "drivers").

The Tiva team could not complete the objective. They didn't have enough time for their level of experience, and exams didn't help. Next year they have to start working on the bot itself earlier; the timing of the contest is really bad because of exams... But here is a video of the 1st attempt (out of 3) of my bot with the STM32. In this one the flame sensor worked well:

More Specific Info - the Code

Now, the logic was simple; the contest challenge is not exactly complicated. Still, some tactics with interrupts and special hardware features were implemented that could not be done with the Arduino software, at least not with the default libraries.

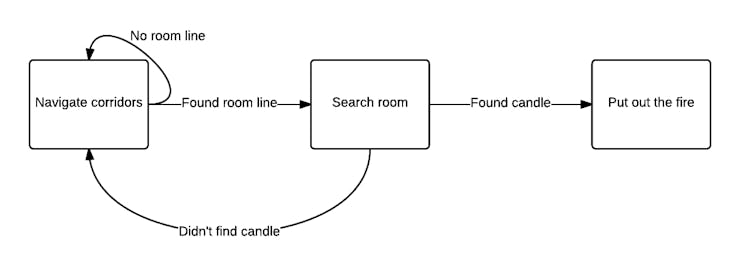

The code was broken down into 3 major parts: navigating the corridors, searching the rooms and putting out the fire once it was found.

Navigating the Corridors

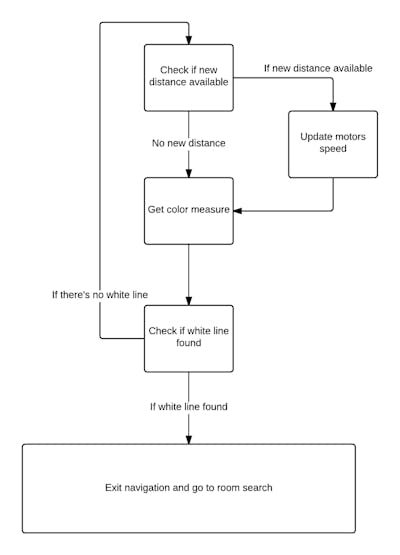

Now this is basically the running loop of the navigation code. The robot updates the motor speeds depending on the distances measured (get away from the wall, get closer, etc.) and keeps checking the color of the floor. The loop ends when a white line is detected, and the robot then goes into room search.
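The "get away from the wall, get closer" decision can be sketched with plain "if"s and open-loop PWM values. This is only an illustration, not the robot's code: the function and type names, every threshold and speed, and the sensor layout (one sonar facing a wall on the right, one facing forward) are all assumptions.

```c
#include <stdint.h>

/* All thresholds and speeds below are assumed values for illustration. */
#define FRONT_STOP_MM 120u  /* obstacle ahead: turn in place */
#define WALL_NEAR_MM  100u  /* closer than this: steer away from the wall */
#define WALL_FAR_MM   200u  /* farther than this: steer toward the wall */
#define CRUISE_PWM     60   /* percent duty cycle, no feedback */
#define STEER_PWM      35

typedef struct {
    int left_pwm;   /* negative means reverse */
    int right_pwm;
} motor_cmd_t;

/* Assumes the followed wall is on the robot's right side. */
motor_cmd_t follow_wall(uint32_t side_mm, uint32_t front_mm)
{
    motor_cmd_t cmd = { CRUISE_PWM, CRUISE_PWM };

    if (front_mm < FRONT_STOP_MM) {
        cmd.left_pwm = -STEER_PWM;   /* spin left, away from the wall */
        cmd.right_pwm = STEER_PWM;
    } else if (side_mm < WALL_NEAR_MM) {
        cmd.left_pwm = STEER_PWM;    /* slow the left wheel: veer left */
    } else if (side_mm > WALL_FAR_MM) {
        cmd.right_pwm = STEER_PWM;   /* slow the right wheel: veer right */
    }
    return cmd;
}
```

The main loop would call something like this only when a fresh distance is available, and spend the rest of its time checking the floor color.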

But if it only checks whether a new distance is available, what's getting the distance in the first place? Well, the advantage of working closer to the hardware is that you can easily take advantage of your hardware and interrupts.

"Background Tasks"

Well, this is no OS, so we can't really say it's multitasking. But it kinda is in a way.

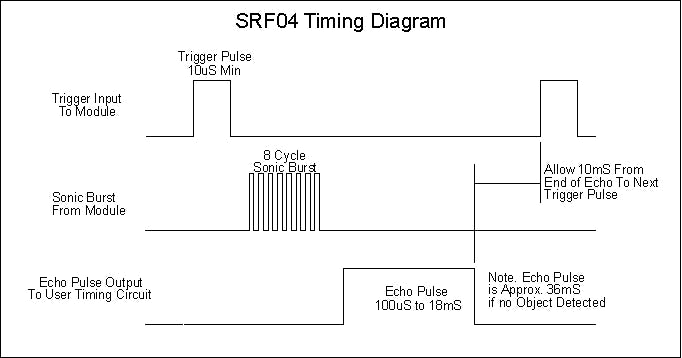

The distance sensors used were HC-SR04s, pretty much an SRF04. These sensors require a trigger pulse of at least 10 us to start a reading and then return the distance as a high pulse of variable width, so you need to measure that pulse width to get the distance.
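To make the pulse-to-distance step concrete, here is a small sketch in C. It assumes a speed of sound of about 343 m/s (air at roughly 20 °C), and the function names and the timer clock parameter are made up for illustration. The sound covers the distance twice (out and back), hence the division by 2.

```c
#include <stdint.h>

/* Convert a captured pulse width in timer ticks to microseconds.
 * timer_hz is the (assumed) clock feeding the capture timer. */
uint32_t echo_ticks_to_us(uint32_t ticks, uint32_t timer_hz)
{
    if (timer_hz == 0u) {
        return 0u;  /* avoid dividing by zero on a bad configuration */
    }
    /* 64-bit intermediate so ticks * 1e6 cannot overflow */
    return (uint32_t)(((uint64_t)ticks * 1000000u) / timer_hz);
}

/* Convert an echo pulse width to a one-way distance in millimetres.
 * 343 m/s is 0.343 mm/us; halve it because the echo is a round trip. */
uint32_t echo_us_to_mm(uint32_t echo_us)
{
    return (echo_us * 343u) / 2000u;
}
```

With these numbers, a 1 m reading corresponds to an echo pulse of roughly 5.8 ms.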

Now if you're using an Arduino/Energia, the most common way to use a SRF04/HC-SR04 is:

1. Set the trigger pin high.
2. Delay 10 us with the delayMicroseconds function.
3. Set the trigger pin low.
4. Use the pulseIn function to wait for and measure the pulse.

Well, this means that:

- You can only read 1 sonar at a time (though I admit firing many at the same time can sometimes be a problem).
- Each measurement can take 100 us to 18 ms, or 36 ms if no object is detected! In this case the distances were generally small, but I did sometimes get about 6 ms or more because of 1 meter readings and, weirdly enough, occasional errors. These inconsistent long delays, caused by long distances or errors, were actually pretty common. Note also that there are milliseconds between the trigger and the echo pulse.

Now this is okay most of the time, but I wanted to take advantage of the color sensor's quick readings and never possibly miss a line. I also wanted to add constant flame scanning in the future.

To add to that, you should only fire the sensors every 50 ms. That was the minimum interval I found that gave consistent, more precise readings without rogue echoes. Implementing that on Arduino without using delays is also possible with millis, but why use that?

So the Solution?

- Generate a PWM pulse with a 50 ms period and a 10 us active time to trigger both sonars at the same time, every 50 ms without fail and without processor intervention.
- Capture each echo with a timer input capture: one timer per sonar measures the width of the pulse.
- Every time the PWM cycle ends, generate an interrupt that saves the measured distance in a variable and sets the flag for a new distance being available.
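The scheme above can be sketched in plain C as follows. This is not the robot's actual code: the function names are hypothetical, the hardware setup (PWM trigger, timer capture configuration) is left out, and the two handlers are meant to be called from the real capture and PWM-period interrupts. It assumes a free-running 32-bit up-counting timer, so the unsigned subtraction stays correct across a counter wrap.

```c
#include <stdbool.h>
#include <stdint.h>

#define NUM_SONARS 2u

static volatile uint32_t rise_count[NUM_SONARS];   /* count at echo rising edge */
static volatile uint32_t pulse_ticks[NUM_SONARS];  /* width of the last echo */
static volatile uint32_t latest_ticks[NUM_SONARS]; /* snapshot for the main loop */
static volatile bool new_distance;                 /* set once per trigger period */

/* To be called from the capture interrupt of sonar i on each echo edge. */
void sonar_on_echo_edge(unsigned i, bool rising, uint32_t timer_count)
{
    if (i >= NUM_SONARS) {
        return;
    }
    if (rising) {
        rise_count[i] = timer_count;
    } else {
        pulse_ticks[i] = timer_count - rise_count[i]; /* wrap-safe */
    }
}

/* To be called from the PWM period interrupt (every 50 ms in the text). */
void sonar_on_trigger_period(void)
{
    for (unsigned i = 0; i < NUM_SONARS; i++) {
        latest_ticks[i] = pulse_ticks[i];
    }
    new_distance = true;
}

/* Main loop side: returns true once per fresh set of readings. */
bool sonar_new_data(void)
{
    bool fresh = new_distance;
    new_distance = false;
    return fresh;
}

/* Last saved pulse width for sonar i, in timer ticks (0 if i is invalid). */
uint32_t sonar_latest_ticks(unsigned i)
{
    return (i < NUM_SONARS) ? latest_ticks[i] : 0u;
}
```

The main loop never waits on a sonar: it polls `sonar_new_data()` and is free to read the color sensor in between. On real hardware the flag test-and-clear would need protecting against the interrupt firing in the middle of it.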

With this I got measurements every 50 ms with minimal processor intervention and no code needlessly blocking. I was able to get color readings at intervals of less than 1 mm of travel, even with the robot at that speed, meaning that it would be very possible to add more tasks like distance traveled, gyroscopes and accelerometers, and maybe constant fire scanning.

Granted, this was not the most useful application of the method, but I do intend to use a newer sensor that gives better readings but is much slower, so this will be more important there.

Searching the Room

This was done in a very simple way - there wasn't even time to make it a bit more complicated anyway.

After the line was detected it was simple. The robot would turn "all the way" left so it would be looking at the far left of the room, and then take little steps to the right until it was facing all the way right. At each step it would get a reading from the phototransistor.

Now if it detected the flame it would go put it out; if it didn't, it would turn the rest of the way to the right and exit the room.

The robot didn't consider the line on the way out. I had it simply go forward a bit after turning to the exit and hope it got over the line, and then hope again that, when it started the navigation code, it would find the wall correctly. Again, time was very short unfortunately.

One thing that could help a lot in searching for the candle would be encoders, so the robot would turn the same amount every time; as it was, the turns were not consistent. That, or have the little flame sensor mounted on a servo so that the robot would not have to move.

Putting out the Fire

Well, this was also not that complicated.

While making the steps to search for the candle, the robot would register the step where the sensor output was highest. With this it would turn back to the left the number of steps needed to align with the candle (without encoders it was not really that perfect).
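The "remember the best step" idea can be sketched like this. It is a hedged illustration rather than the robot's code: the names, the threshold value and its units are assumptions, and it presumes the sweep goes left to right and ends on the last step, so the way back is counted from there.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Assumed detection threshold, in whatever units the flame sensor reports. */
#define FLAME_THRESHOLD 300u

typedef struct {
    bool   found;       /* strongest reading reached the threshold */
    size_t best_step;   /* index of the strongest reading */
    size_t steps_back;  /* steps to turn left from the end of the sweep */
} scan_result_t;

/* readings[0] is the leftmost step, readings[n - 1] the rightmost. */
scan_result_t evaluate_scan(const uint16_t *readings, size_t n)
{
    scan_result_t result = { false, 0, 0 };

    if (readings == NULL || n == 0) {
        return result;  /* nothing was scanned */
    }
    for (size_t i = 1; i < n; i++) {
        if (readings[i] > readings[result.best_step]) {
            result.best_step = i;
        }
    }
    result.found = readings[result.best_step] >= FLAME_THRESHOLD;
    result.steps_back = (n - 1) - result.best_step;
    return result;
}
```

Without encoders each step turns a slightly different angle, so `steps_back` only gets the robot roughly aligned, which is why scanning again after moving forward helps.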

It would go forward a bit, then scan and align again, repeating until the sensor output was over a certain threshold, which meant it was close enough to the candle. Once there it would turn on the fan and wiggle a bit for a long time until the candle was put out for sure.

Well that's that.

I already have some ideas for future improvements, but I have to test them out. I definitely need to get a better flame sensor, that's for sure, and I'm thinking of either adding a custom 3D printed box and an IR filter for the color sensor, or switching to the TCS34725, though I didn't like how slow it can be at getting a good reading :/. Encoders would be nice too, but not a priority.

Let's see what will come out next

This was copied from this page using the Hackster import tool. I made a quick revision of some of my poor grammar and phrasing (it was even worse when I wrote this); I hope it's legible.

The article was first published in hackster, June 24, 2016

cr: https://www.hackster.io/Luis_R_A/fire-fighting-robot-2015-be7437

author: Luís Afonso